If I may weigh in on this subject...

I did a lot of calculations to decide the feasibility of my own project and came to the conclusion that engines are freaking slow. A pretty standard microprocessor can do thousands of calculations in the time it takes the engine to complete one revolution, so it can execute as much code as you could realistically want. That said, I do think an OS is overkill for this, because that's not how microcontrollers were meant to be used. The added bonus of simplifying and shortening the code is that there are fewer things to go wrong.
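To put rough numbers on the "engines are slow" point, here is a back-of-the-envelope sketch in C. The 16 MHz clock and 6000 RPM used in the note below are assumptions picked for illustration, and `cycles_per_rev` is a hypothetical helper name, not something taken from a real project.

```c
#include <assert.h>
#include <stdint.h>

/* CPU clock cycles available during one crankshaft revolution.
 * cpu_hz and rpm are illustrative inputs (assumed values). */
uint32_t cycles_per_rev(uint32_t cpu_hz, uint32_t rpm)
{
    assert(rpm > 0);
    /* revolutions per second = rpm / 60,
     * so cycles per revolution = cpu_hz * 60 / rpm.
     * The 64-bit intermediate avoids overflow in cpu_hz * 60. */
    return (uint32_t)(((uint64_t)cpu_hz * 60u) / rpm);
}
```

With those assumed figures, `cycles_per_rev(16000000u, 6000u)` comes out to 160,000 clock cycles per revolution, and the budget only grows at lower engine speeds.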

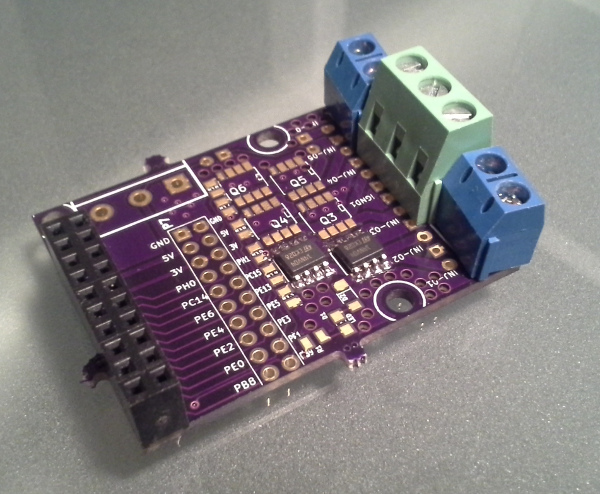

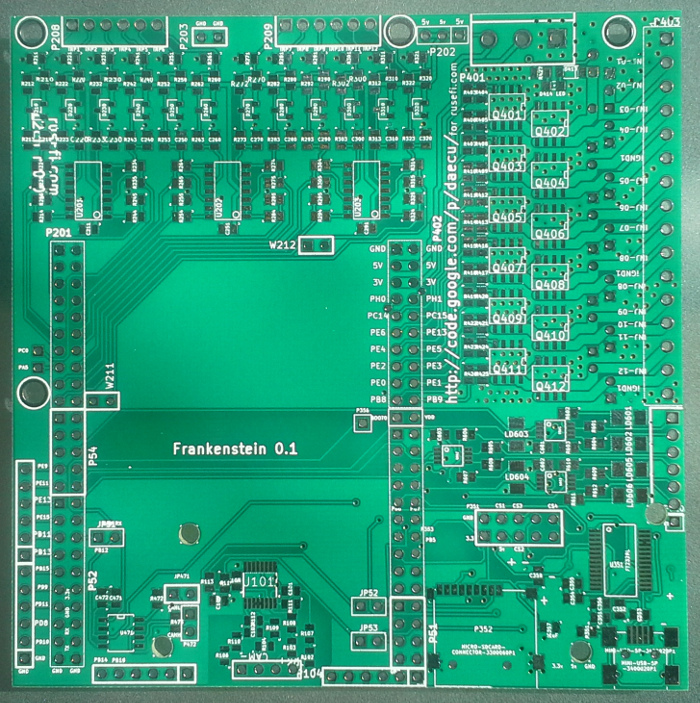

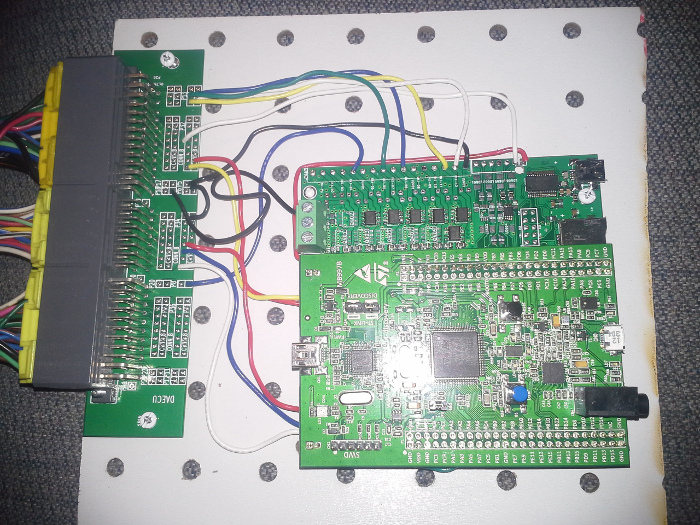

My solution was to offload the actual control of everything to hardware routines so that I can run the necessary calculations in the downtime. Timing and control are always at the top of the priority list and will always happen exactly when they need to. The nice thing about this system is that I can update the values on the fly without interrupting the hardware routines, which means the system never ends up working from conflicting values while running. I was actually planning on moving even more out of software in my system.
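One common way to get that "update on the fly without conflicts" behaviour in software is a double buffer: the slow code fills a spare copy of the values and then flips a single index. This is only a minimal sketch of that general idea, not the actual implementation described here. The struct fields and function names are hypothetical, and it assumes a single-core part with one writer (the main loop) and a reader (the timing routine or ISR) that runs to completion once it starts.

```c
#include <stdatomic.h>
#include <stdint.h>

/* Hypothetical set of values the timing routine consumes. */
typedef struct {
    uint16_t spark_advance_ticks;
    uint16_t dwell_ticks;
} timing_t;

static timing_t slots[2];        /* two copies: one live, one spare */
static atomic_uint active_slot;  /* index (0 or 1) of the live copy */

/* Main loop side: fill the spare copy, then publish it with a single
 * atomic store. The reader never sees a half-written struct. */
void timing_publish(timing_t next)
{
    unsigned spare = 1u - atomic_load(&active_slot);
    slots[spare] = next;
    atomic_store(&active_slot, spare);
}

/* Timing routine side: always reads whichever copy is currently live. */
timing_t timing_current(void)
{
    return slots[atomic_load(&active_slot)];
}
```

If the timing routine fires while `timing_publish` is halfway through writing, it still reads the old, complete copy, so the values it uses always belong together. Many timer peripherals offer something similar in hardware through buffered or preloaded compare registers, but that depends on the specific chip.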

The sentiment of tight code in a microcontroller application is one that needs to be heeded, though, because any discrepancies, memory leaks, or timing issues will quickly add up when the routines run hundreds of thousands of times.

It's hard to tell whether those concerns are actually real until a system has been fully tested, though.